The Gap Nobody Talks About: 92% of Companies Want AI But Only 7% Have the Skills to Execute

92% of companies are increasing AI investments, yet only 7% have successfully scaled AI across the enterprise. Here's the real reason most organizations are stuck in pilot purgatory — and the engineering skills defining the future of AI.


“The real AI bottleneck isn’t models anymore. It’s engineering maturity.” (AuraDevs, 2026)

There’s a number that should be making headlines every week but somehow isn’t.

According to McKinsey’s 2025 State of AI report, 92% of companies plan to increase AI investments between now and 2027. Nearly every boardroom, every product roadmap, every engineering all-hands has “AI” somewhere near the top.

And yet only 7% of companies have fully scaled AI across their enterprise. McKinsey goes further: just 1% of leaders consider their company truly “AI mature,” meaning AI is fully integrated into workflows and driving substantial business outcomes.

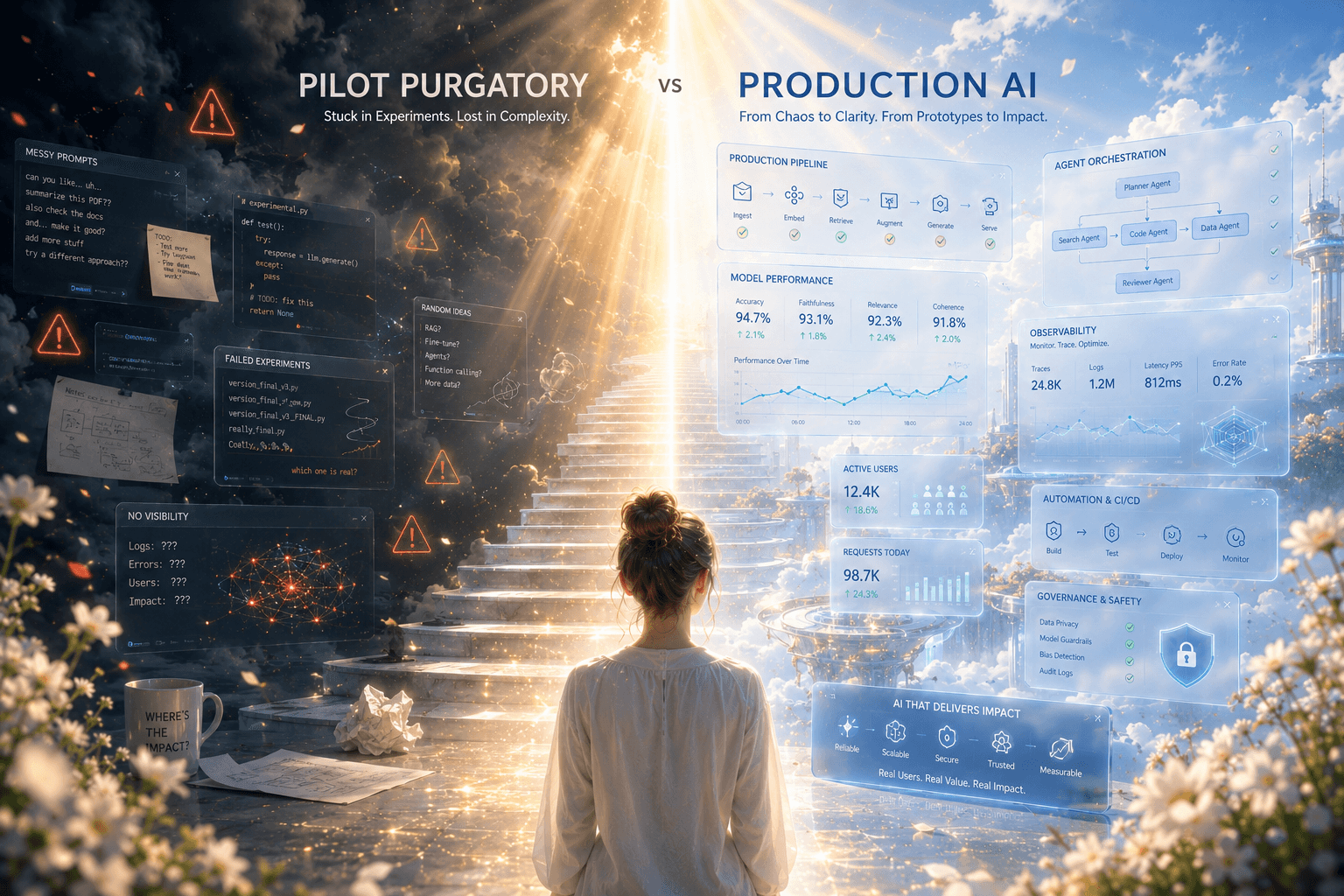

The other 93% are stuck. Experimenting, piloting, planning, promising. But not shipping.

This isn’t a model problem. The models are good. It’s not a budget problem either; enterprises are spending billions. It’s a skills problem, and it’s deeper and more structural than most people want to admit. This post breaks down exactly what the gap looks like technically, why it exists, and what developers and engineers can do about it right now.

The Numbers Behind the Gap

Before diagnosing the problem, it helps to understand its full shape.

On the demand side:

- 91% of businesses report using AI in at least one capacity in 2026, up from 78% in 2024 and 55% in 2023

- AI job postings are 134% above 2020 levels; in the US alone, 275,000 job postings required AI skills in January 2026

- Companies focused on training large-scale AI models saw a 92% year-over-year increase in headcount (LinkedIn, January 2026)

- The number of workers in occupations where AI fluency is explicitly required has grown sevenfold in just two years (McKinsey)

On the supply side:

- Only 9% of organizations have achieved AI maturity, despite near-universal adoption

- 62% of organizations are stuck in the experimentation phase, the so-called “Pilot Purgatory”

- More than half the global workforce (56%) reports receiving no recent AI training (ManpowerGroup Global Talent Barometer 2026)

- 57% of workers lack access to mentorship opportunities for AI skill development

- Only 28% of organizations plan to invest in upskilling programs over the next two to three years

The gap isn’t between companies that want AI and companies that don’t. It’s between companies that have deployed AI tools and companies that have built the human infrastructure to actually use them.

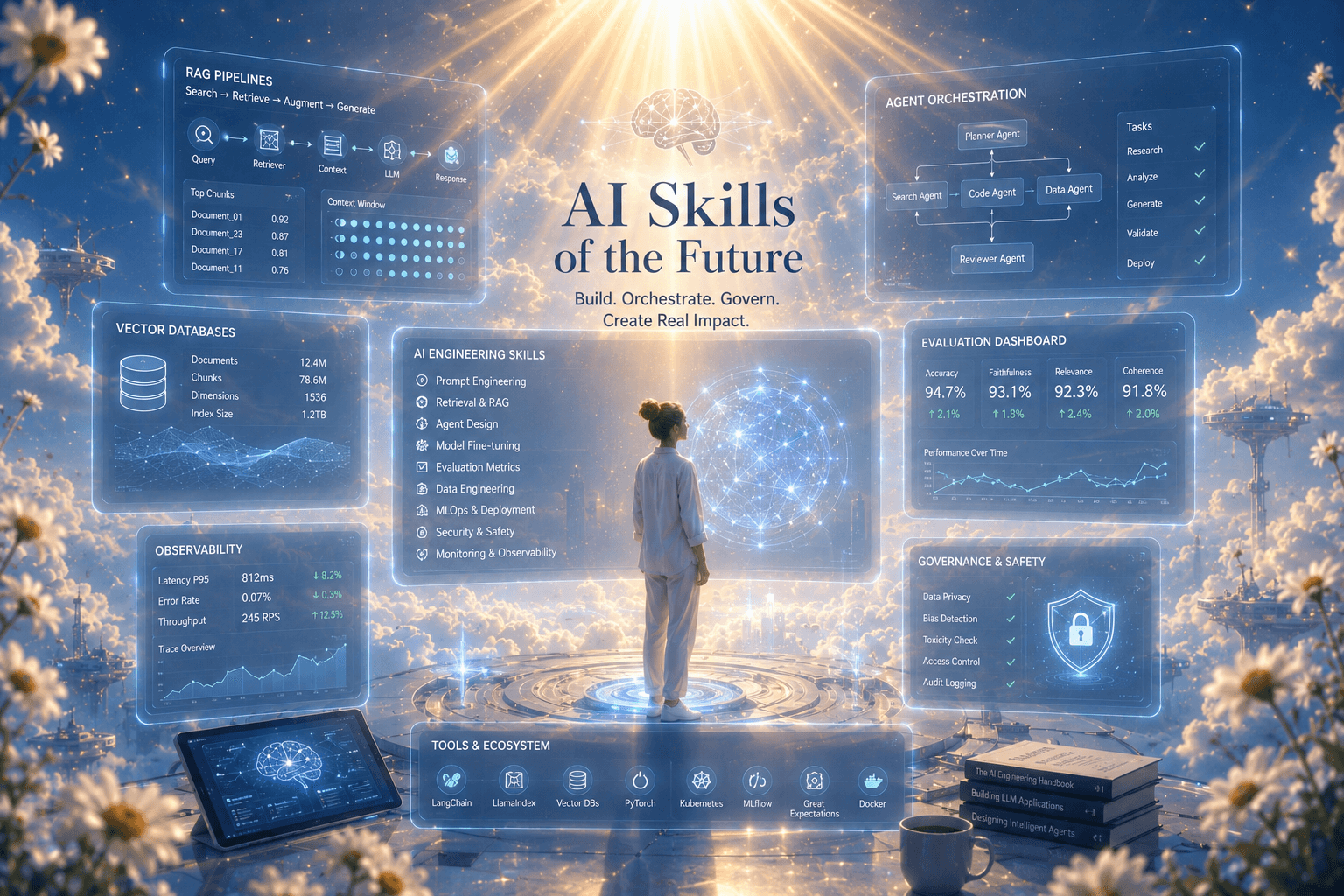

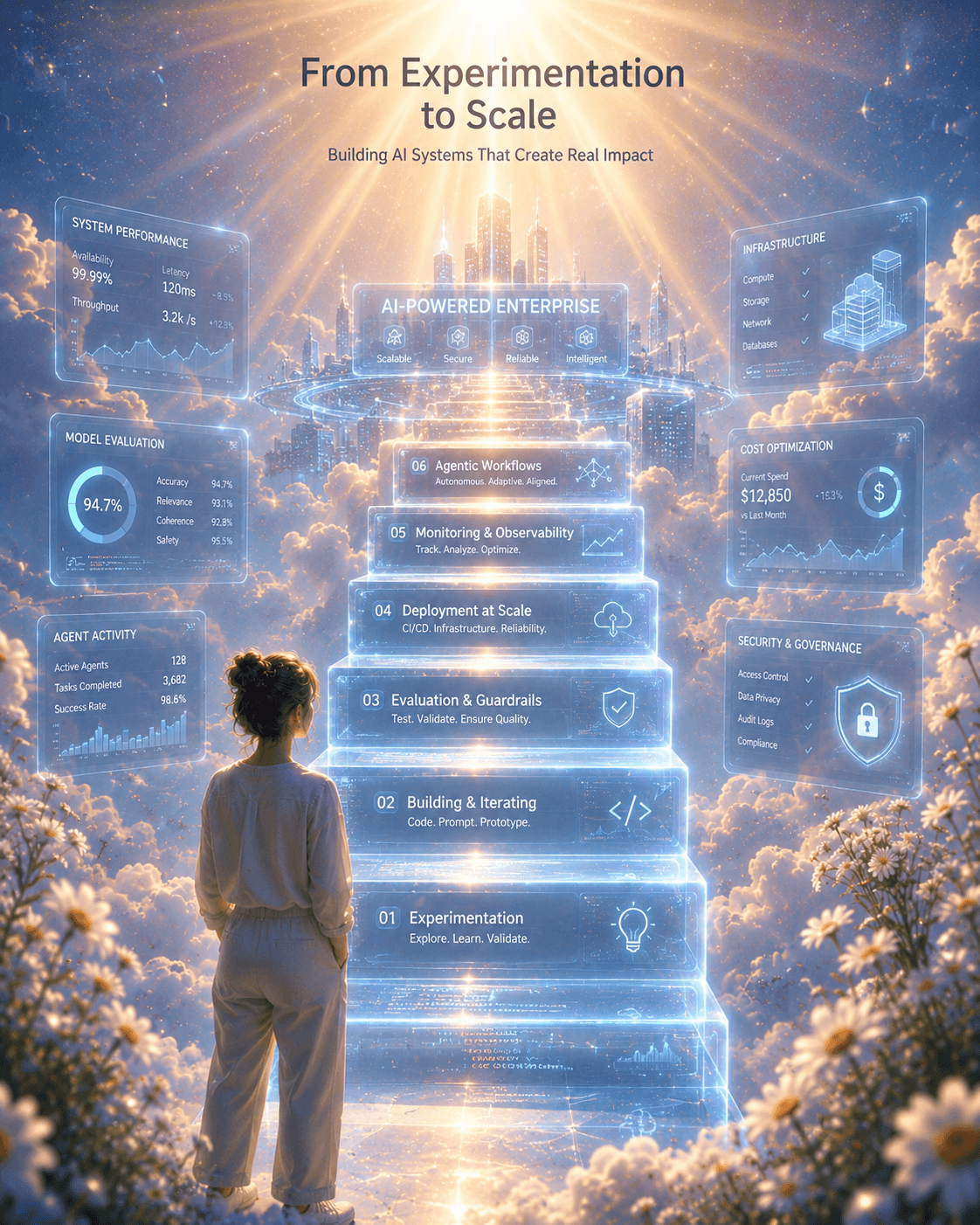

What “Fully Scaled AI” Actually Requires Technically

This is where most articles stop short. “Learn AI skills” is not actionable advice. Here’s what production-grade AI deployment actually demands from your technical team:

1. MLOps & AI Infrastructure Engineering

Deploying a model in a Jupyter notebook is not production. Getting it to production requires:

- Model versioning and registries (MLflow, Weights & Biases, DVC): tracking experiments, lineage, and reproducibility

- Feature stores (Feast, Tecton): centralized, low-latency feature serving at inference time

- CI/CD for ML: automated training pipelines, model validation gates, rollback strategies

- Monitoring and observability: detecting data drift, concept drift, and model degradation in real time using tools like Evidently AI, Arize, or whylogs

- Containerization: packaging models with Docker, orchestrating inference services with Kubernetes

Most teams can build a model. Very few have all five of these in place simultaneously. That’s why deployment stalls.
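To make the monitoring and validation-gate bullets above concrete, here is a minimal sketch of a drift check using the Population Stability Index (PSI), one common drift statistic. It is not how Evidently AI, Arize, or whylogs implement drift detection; the function names, the equal-width binning, and the 0.2 threshold (a widely quoted rule of thumb) are all assumptions made for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference (training-time) sample and a current
    (serving-time) sample of a single numeric feature."""
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread; PSI is undefined")
    width = (hi - lo) / bins

    def bin_fractions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_frac = bin_fractions(reference)
    cur_frac = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))


def should_block_promotion(psi: float, threshold: float = 0.2) -> bool:
    """Hypothetical validation gate: True means hold the release for review."""
    return psi > threshold
```

A check like this would typically run per feature on a schedule, or as a step in the CI/CD pipeline before a retrained model is promoted. The threshold is something each team has to calibrate against its own data.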

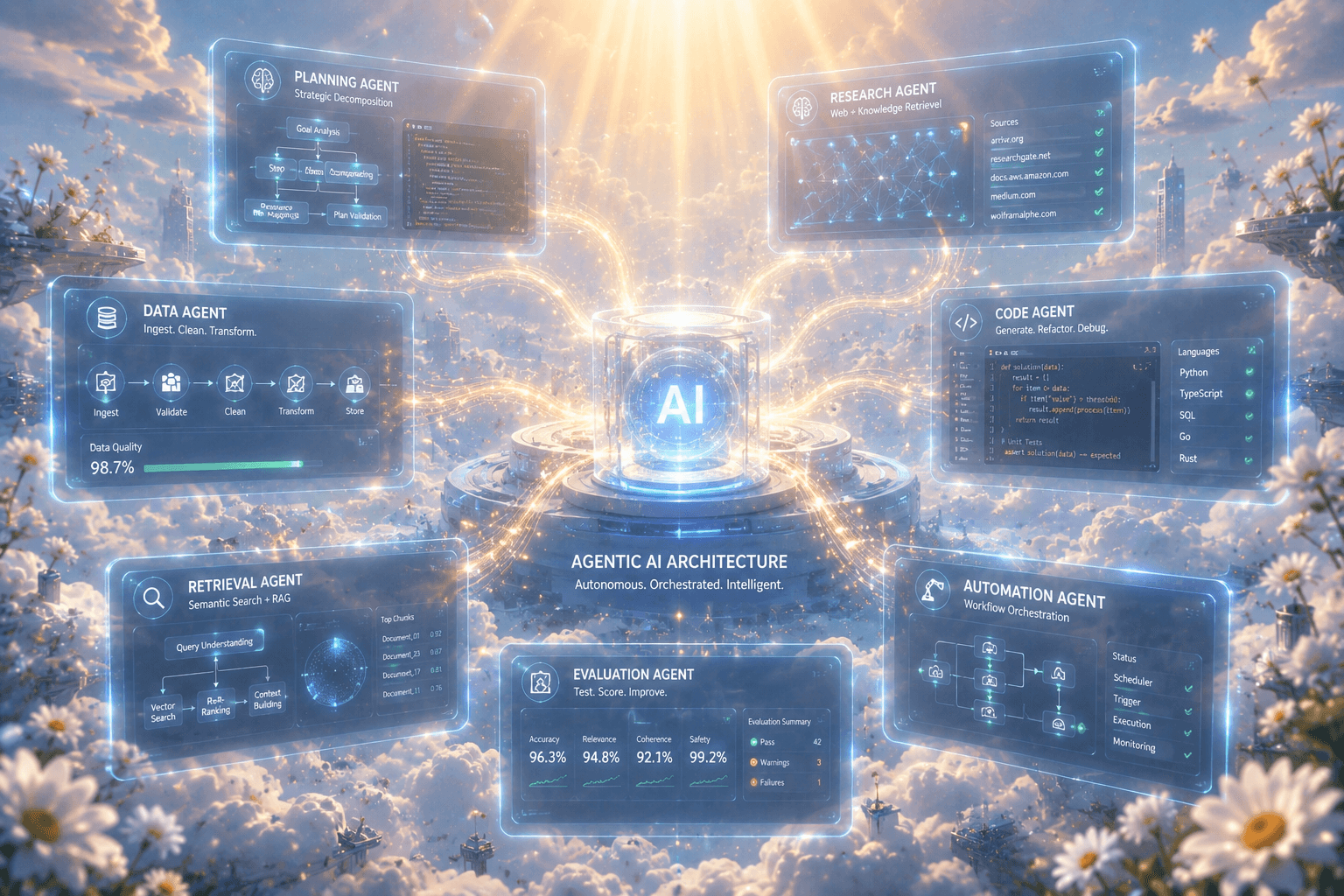

2. Agentic AI Architecture

This is the fastest-moving area of the entire stack right now. Projections for 2026 put AI agents in 40% of enterprise applications, yet only 11% of organizations have agents running in production.

Building reliable agentic systems requires fundamentally different engineering skills:

- Multi-agent orchestration: designing systems where multiple specialized agents collaborate on complex tasks (AutoGen, CrewAI, LangGraph)

- Tool-use design: defining clean, reliable tool schemas that agents can call without hallucinating parameters

- Retrieval-Augmented Generation (RAG): building vector pipelines (embedding, chunking, indexing, retrieval) using databases like Pinecone, Weaviate, or pgvector

- Memory architectures: short-term (context window), long-term (vector store), and episodic memory for agents that need to maintain state across sessions

- Human-in-the-loop (HITL) design: knowing precisely where autonomous execution is safe and where human review gates must be inserted

- Safety guardrails: prompt injection defense, output validation, and sandboxed tool execution
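The tool-use, human-in-the-loop, and guardrail bullets above can be sketched in a few lines of plain Python. This is an illustrative pattern, not the API of AutoGen, CrewAI, or LangGraph: `ToolSpec`, `ToolCallError`, `execute_tool_call`, and the `approve` callback are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolSpec:
    """Hypothetical tool definition: the schema an agent is allowed to call."""
    name: str
    params: dict[str, type]           # required parameter name -> expected type
    handler: Callable[..., Any]
    needs_human_approval: bool = False


class ToolCallError(ValueError):
    """Raised when an agent proposes a call that does not match the schema."""


def validate_call(spec: ToolSpec, args: dict[str, Any]) -> None:
    unknown = set(args) - set(spec.params)
    missing = set(spec.params) - set(args)
    if unknown:
        # Typical symptom of a hallucinated parameter.
        raise ToolCallError(f"{spec.name}: unknown parameters {sorted(unknown)}")
    if missing:
        raise ToolCallError(f"{spec.name}: missing parameters {sorted(missing)}")
    for key, expected in spec.params.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(f"{spec.name}: '{key}' must be {expected.__name__}")


def execute_tool_call(
    registry: dict[str, ToolSpec],
    name: str,
    args: dict[str, Any],
    approve: Callable[[ToolSpec, dict[str, Any]], bool] = lambda spec, args: False,
) -> dict[str, Any]:
    """Validate a model-proposed call, then run it or park it for human review."""
    if name not in registry:
        raise ToolCallError(f"unknown tool '{name}'")
    spec = registry[name]
    validate_call(spec, args)
    if spec.needs_human_approval and not approve(spec, args):
        # Review gate: nothing executes until a human signs off.
        return {"status": "pending_review", "tool": name}
    return {"status": "ok", "result": spec.handler(**args)}
```

The design choice here is that the model's output is treated as untrusted input: it is validated against the schema before any handler runs, and risky tools default to "not approved" unless a reviewer callback says otherwise.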

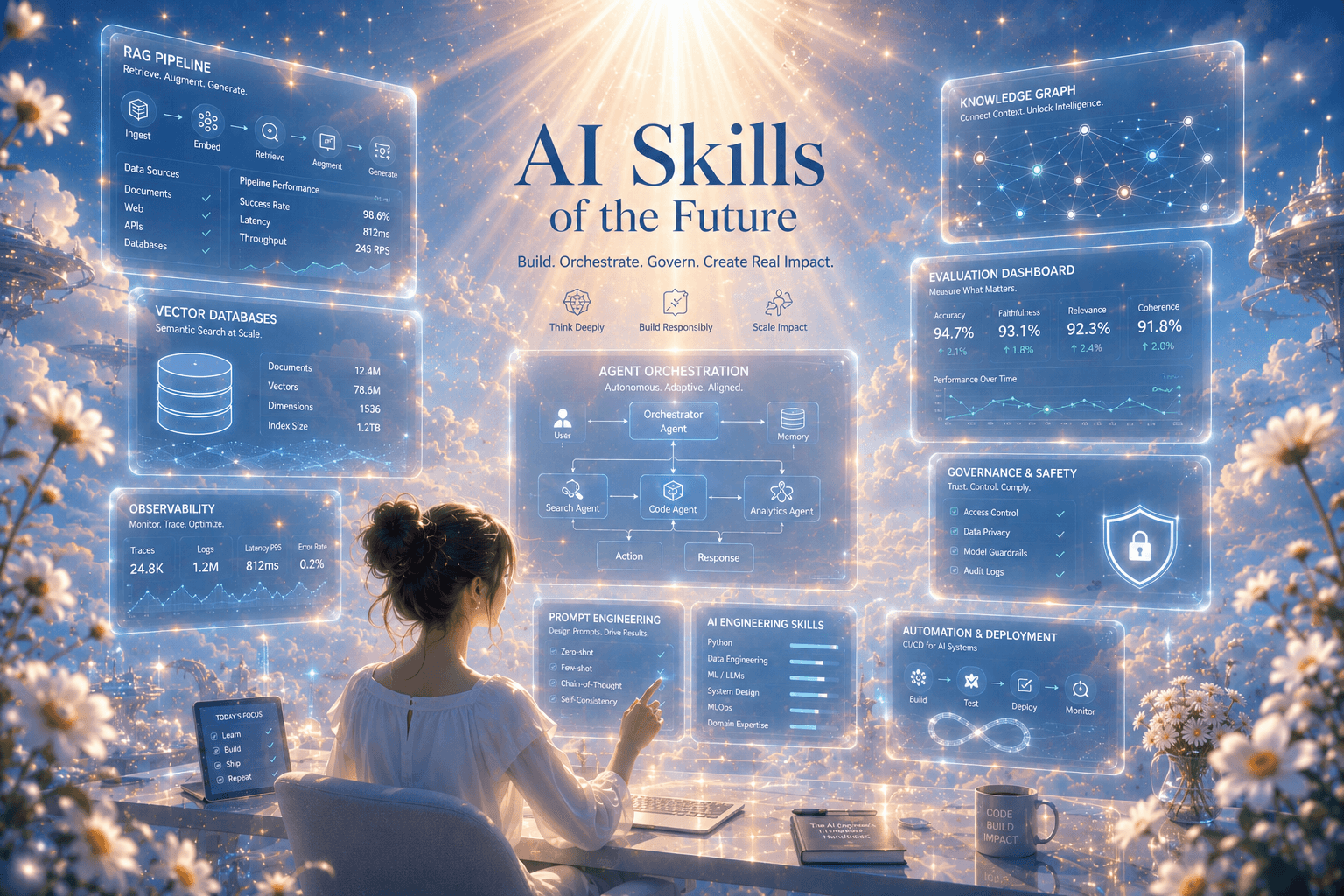

LangChain, RAG systems, vector databases, and multi-agent orchestration are now the skills LinkedIn identifies as most common among the fastest-growing AI roles. They’re also the skills with the smallest talent pool.

3. Prompt Engineering at the Systems Level

Individual prompt engineering (crafting a good prompt for personal use) is table stakes. Systems-level prompt engineering is a different discipline entirely:

- Prompt templating and versioning: treating prompts as code artifacts with version control (LangSmith, PromptLayer)

- Few-shot example management: curating, evaluating, and dynamically selecting in-context examples

- Chain-of-thought and tree-of-thought architectures: structuring multi-step reasoning for complex tasks

- Output parsing and structured generation: using Pydantic models, function calling, and JSON mode to enforce reliable output schemas

- Evals-driven development: building automated evaluation pipelines before deploying prompt changes (RAGAS, DeepEval, LangSmith evaluators)

This last point is where most teams fail. They ship prompt changes without evals, discover regressions in production, and lose trust in their AI systems.
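What an eval gate looks like in its simplest form is sketched below. It is a toy regression check under stated assumptions, not the RAGAS, DeepEval, or LangSmith API: `generate` stands in for "call the model with a given prompt version", and the substring-match scoring, `pass_rate`, and `gate_prompt_change` are hypothetical choices for illustration. Real evals usually need richer scoring.

```python
from typing import Callable

# One eval case: (input text, substring the answer is expected to contain).
EvalCase = tuple[str, str]


def pass_rate(generate: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected substring."""
    if not cases:
        raise ValueError("an eval set with no cases cannot gate anything")
    passed = sum(
        1 for question, expected in cases
        if expected.lower() in generate(question).lower()
    )
    return passed / len(cases)


def gate_prompt_change(
    baseline: Callable[[str], str],
    candidate: Callable[[str], str],
    cases: list[EvalCase],
    max_regression: float = 0.0,
) -> bool:
    """True if the candidate prompt may ship: its pass rate is no worse than
    the baseline's, minus an explicitly tolerated regression."""
    return pass_rate(candidate, cases) >= pass_rate(baseline, cases) - max_regression
```

Wired into CI, a gate like this makes a prompt change fail the build the same way a broken unit test would, which is the point: regressions surface before production rather than in it.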

4. AI Governance, Security, and Compliance

Demand for AI governance skills is up 150% in 2026, and it’s not because companies are excited about policy. It’s because they’ve shipped things that broke.

What this looks like technically:

- Responsible AI frameworks: bias detection pipelines, fairness metrics, disparate impact testing

- Data lineage and provenance tracking: knowing exactly what data trained your model and where it came from

- PII detection and redaction: preventing sensitive data from entering prompts or being surfaced in completions (Microsoft Presidio, Amazon Comprehend)

- Audit logging for AI decisions: structured logging of inputs, outputs, model versions, and decision rationale for regulatory review

- Red-teaming and adversarial testing: systematic prompt injection testing, jailbreak resistance evaluation
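As a rough illustration of the PII-redaction and audit-logging bullets above, the sketch below redacts two kinds of identifiers with regular expressions and emits a structured log record. It is deliberately simplistic: tools like Microsoft Presidio or Amazon Comprehend use much broader detection than two regexes, and the pattern set, field names, and `audit_record` function are assumptions for this example only.

```python
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only; real PII detection needs far wider coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a <LABEL> placeholder and count the hits."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"<{label}>", text)
        if n:
            counts[label] = n
    return text, counts


def audit_record(prompt: str, completion: str, model_version: str) -> str:
    """One JSON log line per AI decision, with PII stripped before storage."""
    safe_prompt, prompt_hits = redact(prompt)
    safe_completion, completion_hits = redact(completion)
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt": safe_prompt,
        "completion": safe_completion,
        "pii_in_prompt": prompt_hits,
        "pii_in_completion": completion_hits,
    }
    return json.dumps(record, sort_keys=True)
```

Redacting before logging matters as much as redacting before prompting: an audit trail that stores raw PII becomes a compliance problem of its own.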

60% of executives say Responsible AI boosts ROI and efficiency, yet nearly half say turning those principles into operational processes has been a major challenge (PwC 2025).

5. Data Engineering for AI

Every AI system is only as good as the data feeding it. The talent gap here is acute:

- Data pipeline architecture: building reliable ingestion from heterogeneous sources (Kafka, Airflow, dbt)

- Vector database management: indexing strategies, metadata filtering, hybrid search (dense + sparse retrieval)

- Synthetic data generation: creating training and evaluation data when real data is scarce or sensitive

- Data quality and validation: Great Expectations, Soda, and similar frameworks to catch schema drift before it corrupts your model

- Embedding pipelines: chunking strategies, embedding model selection, and re-embedding when models are updated
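The embedding-pipeline bullet hides two mechanical problems: how to split text, and how to know which vectors are stale after an embedding model changes. A minimal sketch of both follows, assuming word-based chunks with overlap and a metadata field recording the embedding model. `chunk_words`, `ChunkRecord`, `stale_chunks`, and the default sizes are hypothetical; production pipelines often chunk by tokens or document structure instead.

```python
from dataclasses import dataclass


def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word windows of chunk_size that share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


@dataclass
class ChunkRecord:
    """Metadata stored next to each vector so stale embeddings can be found."""
    chunk_id: str
    text: str
    embedding_model: str


def stale_chunks(records: list[ChunkRecord], current_model: str) -> list[ChunkRecord]:
    """Chunks embedded with a different model than the one now in use."""
    return [r for r in records if r.embedding_model != current_model]
```

Storing the embedding model name alongside each chunk is what makes re-embedding a targeted job rather than a full rebuild: vectors from different models are not comparable, so stale ones have to be found and replaced.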

Data scientists and data analysts are projected to see 414% growth in job demand, driven precisely by the recognition that AI without clean, well-structured data is useless.

Why the Gap Is Structural, Not Just Technical

The skills shortage isn’t simply “people haven’t learned the tools yet.” It’s structural for several compounding reasons:

Reason 1: The timeline mismatch

Enterprise AI adoption has accelerated faster than educational systems can respond. The most in-demand skills (agentic AI architecture, MLOps, AI governance) barely existed as coherent disciplines two years ago. No university curriculum has caught up. Most bootcamps haven’t either.

Reason 2: The training paradox

80% of tech-focused organizations say upskilling is the most effective way to reduce skills gaps (McKinsey). Yet only 28% are planning to invest in upskilling programs. Companies want the skills but won’t pay to build them internally, creating a standoff that keeps the gap open.

Reason 3: Pilot Purgatory is sticky

Getting from 0 to a working prototype with a modern LLM is genuinely easy. Getting from prototype to reliable production requires MLOps maturity, eval pipelines, monitoring, governance, and cultural change management. Most teams nail the first step and stall on everything after it. The 62% stuck in experimentation aren’t there because they haven’t tried. They’re there because the production gap is enormous and largely invisible until you hit it.

Reason 4: The confidence collapse

ManpowerGroup’s 2026 Global Talent Barometer found that regular AI usage jumped 13%, while confidence in using technology fell sharply by 18%. Workers are being given AI tools without training, using them ineffectively, and concluding the tools are the problem. This erodes organizational trust in AI initiatives and slows adoption further.

The Productivity Premium at Stake

For anyone wondering if closing this gap is worth the investment:

- Industries most exposed to AI saw 27% productivity growth from 2018–2024, versus 7% for less-exposed industries (PwC)

- GitHub Copilot users completed 126% more coding projects weekly in controlled experiments

- Programmers with AI assistance were 55.8% faster at implementing tasks (GitHub research)

- Companies with scaled AI report an average 11.5% net productivity increase over the past 12 months (Morgan Stanley)

- The 27% of AI power users who save over 9 hours per week are compounding that advantage daily

The productivity gap between organizations that have scaled AI and those stuck in pilot purgatory is already large. By 2027, it will be defining.

The 5 Skills With the Highest ROI Right Now

If you’re a developer trying to position for where the market is actually moving, here’s where to focus, ranked by demand growth and talent scarcity:

1. Agentic AI Development (LangGraph, CrewAI, AutoGen): Demand far outstrips a tiny talent pool. Agent architects who can take multi-agent systems from POC to production are the single most sought-after profile in enterprise AI right now. Agentic AI Developer roles command a 15–20% salary premium over standard ML engineers.

2. RAG Pipeline Engineering: RAG appears in the majority of enterprise AI deployments. Knowing how to build, evaluate, and optimize retrieval pipelines (chunking strategies, embedding selection, hybrid search, re-rankers) is a durable, high-value skill that won’t be abstracted away anytime soon.

3. MLOps & Model Observability: Shipping models is easy. Keeping them working reliably in production (with drift detection, automated retraining triggers, and rollback capabilities) is hard. Engineers who can build and maintain this infrastructure are chronically undersupplied.

4. AI Evals Engineering: Building automated evaluation pipelines is one of the least glamorous and most critical skills in the stack. Teams that can’t eval reliably can’t ship confidently. This is the engineering equivalent of writing good tests: undervalued until something breaks in production.

5. AI Governance and Compliance Engineering: With AI regulation accelerating globally (EU AI Act, US executive orders, India’s emerging framework), organizations desperately need engineers who understand both the technical and regulatory dimensions of safe AI deployment. Demand for AI governance skills is up 150% year-over-year.

What This Means for Your Career

The 7% figure isn’t depressing. It’s directional.

It means the overwhelming majority of AI work in enterprises is yet to be done. It means the engineers who build the skills to close this gap are entering a market where demand dramatically outstrips supply. It means the salary premiums, the opportunities, and the career leverage sit squarely on the production-deployment side of the stack, not on the “I can write a GPT wrapper” side.

The path forward is specific:

Stop experimenting, start deploying. Build systems that handle real data, real failure modes, and real production constraints. A RAG pipeline that you’ve taken through evaluation, versioning, and monitoring teaches you more than ten prototypes.

Learn evals before you learn anything else. The ability to measure whether your AI system is working correctly is the foundation of everything else. Without it, you’re flying blind.

Specialize in one layer of the stack deeply. Agentic orchestration, MLOps, data pipelines, governance: pick one, go deep, become the person your team calls when that layer breaks.

Build in public. 60% of AI engineering hires come through referrals and portfolios. A GitHub repository showing a production-grade RAG system with eval pipelines is worth more than any certification.

The gap is real. The window to close it, for your organization and your career, is open right now. But it won’t stay open indefinitely.

Found this useful? Follow for more deep-dives on AI engineering, developer careers, and what’s actually happening in the tech industry, not just what sounds good in a press release.
